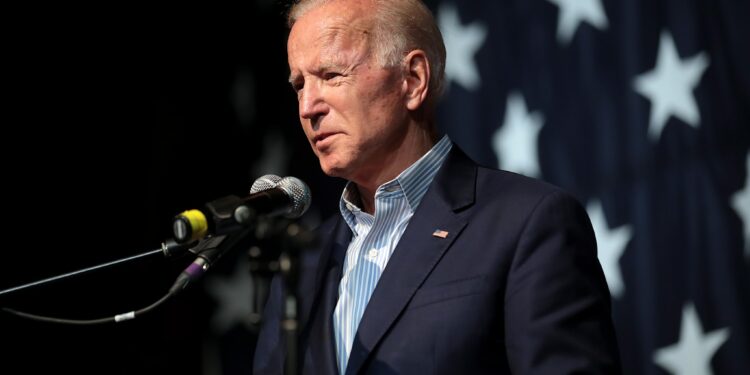

Since the beginning of President Biden’s term, opinions have varied about the strength of America’s position on the global stage. We’re interested in your perspective. Do you think Biden’s presidency has left America critically weak? If you have a more positive assessment of the country’s current standing, answer No.

Yes

It has.

No

It hasn’t.